While I was explaining Google Maps to my mom, she asked if it could just speak. That’s exactly what multimodal AI does. Discover the latest trends in AI shaping our future!

Have you watched the movie Iron Man? Then you have surely come across J.A.R.V.I.S., Tony Stark’s AI companion, which had multimodal capabilities – it could process voice commands, recognize images, analyze surroundings, and even control machines. We’re not exactly at J.A.R.V.I.S. level yet, but thanks to rapid progress in machine learning, we are getting closer. This kind of AI is known as multimodal AI.

This AI can see a picture, read a caption, hear a voice, and make sense of it all together – just like we do. And with emerging technologies pushing the boundaries, multimodal AI is finding real-world applications in fields like robotics and automation, changing everything from self-driving cars to virtual assistants.

And guess what? It’s not just some fancy term for tech nerds sitting in air-conditioned offices. It’s already everywhere – from your YouTube recommendations to self-checkout kiosks at malls and even in the way your phone suggests emojis based on what you type (yes, it knows when you’re feeling filmy).

So, what’s new in this multimodal AI game? Well, sit back with your snacks because we’re about to break it down!

What Is Multimodal AI and How Does It Work?

In simple terms, multimodal AI is like that multi-talented guide on a trip – the one who can speak English, Hindi, Punjabi and even crack jokes in Tamil. It can process and respond to different types of information together – be it text, voice, images, or videos. Unlike earlier AI models, which were usually built to handle just one type of input, multimodal AI can juggle several of them at once.

For example:

♦ You can ask your voice assistant, “Show me the best dosa places near me.” It won’t just read out a list – it will show photos, reviews and even tell you which one is the closest.

♦ You upload a blurry photo of a medicine strip on an app, and AI identifies the name and reads out the instructions.

♦ When you hum a song’s tune into your phone, it identifies the track and artist and suggests a playlist. Try it out with SoundHound.

Sounds like sorcery, right? But it’s not – it’s AI finally understanding us the way we understand the world.
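For the curious, here is a toy sketch of one simple way to combine several inputs, often called late fusion: each input type gives its own guess, and the guesses are averaged. This is only an illustration under made-up assumptions. The function names, scores, and weights are hypothetical, and real multimodal models usually blend what they learn from each input much earlier and in far more sophisticated ways.

```python
# Toy "late fusion": each modality scores the candidate answers on its own,
# and the final answer is the one with the best weighted average score.
# All names, scores, and weights below are made up for illustration.

def fuse_scores(modality_scores, weights=None):
    """Combine per-modality scores into one weighted average per candidate."""
    if weights is None:
        # By default, trust every modality equally.
        weights = {name: 1.0 for name in modality_scores}
    total_weight = sum(weights[name] for name in modality_scores)
    fused = {}
    for name, scores in modality_scores.items():
        for candidate, score in scores.items():
            share = weights[name] * score / total_weight
            fused[candidate] = fused.get(candidate, 0.0) + share
    return fused


def best_candidate(modality_scores, weights=None):
    """Return the candidate with the highest fused score."""
    fused = fuse_scores(modality_scores, weights)
    return max(fused, key=fused.get)


example_scores = {
    "voice": {"dosa": 0.7, "pizza": 0.3},  # what a speech model might have heard
    "image": {"dosa": 0.6, "pizza": 0.4},  # what an image model might have seen
    "text": {"dosa": 0.4, "pizza": 0.6},   # what a typed caption might suggest
}

print(best_candidate(example_scores))
```

Here the voice and image guesses outweigh the text guess, so the combined answer is the dosa. Passing different `weights` lets you trust one input more than the others.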

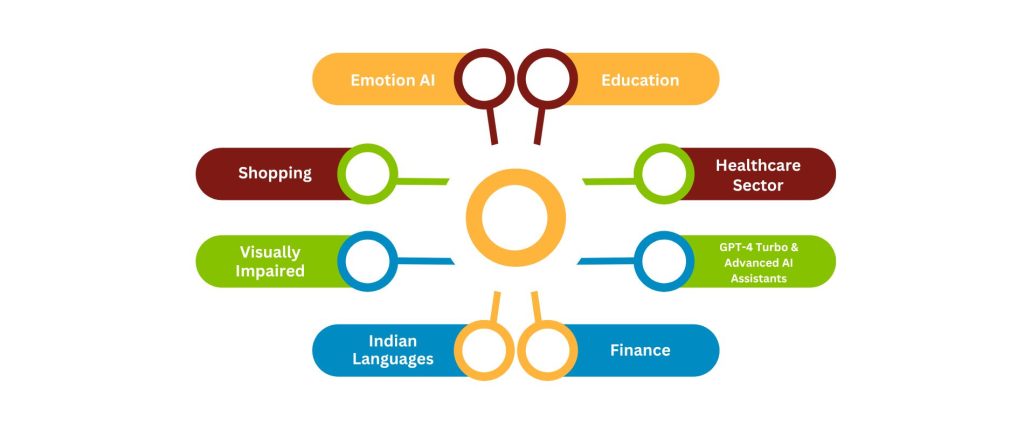

Applications of Multimodal AI Models

Latest Breakthroughs!

Multimodal AI is advancing at full speed, and some recent developments are straight out of a sci-fi movie. Here are some of the latest breakthroughs:

1. GPT-4 Turbo & Advanced AI Assistants

OpenAI, the company behind ChatGPT, has introduced multimodal models such as GPT-4 Turbo that can take images as well as text as input. This means you can now show an AI a picture of your fridge’s contents and ask, “What can I cook with this?” It will actually give you a recipe! Handy for using up those forgotten ingredients or experimenting with new recipes.

2. Emotion AI

Also known as Affective Computing, this AI application can detect emotions from voice, text, and facial expressions.

Emotion AI powers:

♦ Virtual assistants that sense frustration in your voice and adjust their tone.

♦ Customer service bots that detect dissatisfaction and offer better solutions.

One example is Hume AI, which develops empathic models that analyze voice, text, and visuals to enable emotionally intelligent interactions. Its empathic large language model (eLLM) and APIs are designed to recognize and respond to human emotions.

3. AI in Indian Languages

AI is learning to adapt to a country as diverse as India, where every state has its own way of speaking. New models can now understand Hinglish (interesting, isn’t it?), regional dialects, and even grasp the context behind specific phrases.

Why does it matter? AI in Indian languages makes technology more accessible, helping in:

♦ Customer Support

♦ Education

♦ Digital Services

This bridges the language gap for millions across India.

4. AI for the Visually Impaired

Tech companies are working on AI tools that describe surroundings to visually impaired users.

Imagine:

♦ Pointing your phone at a restaurant menu, and the AI reads out the options.

♦ An app that narrates what’s happening around you in real-time – almost like a personal guide.

Examples include Microsoft’s Seeing AI, Google’s Lookout, and Be My Eyes.

5. Multimodal AI in Shopping

I’m sure you’ve used this already. You must have clicked a picture of a dress or taken a screenshot of a product and searched for it on Google Lens. Now shopping apps are also integrating AI that lets you snap a picture and find similar products online – like Myntra, Flipkart, Amazon’s StyleSnap, and more.
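If you’re wondering how “find similar products” could work, one common approach is to turn every image into a list of numbers (an embedding) and then look for the catalog items whose numbers point in the most similar direction. The sketch below shows only that matching step. It is an assumption about how such a feature might be built, not a description of how Google Lens or any of these apps actually work, and the product names and vectors are made up. In a real system the vectors would come from an image model.

```python
import math

# Toy visual search: rank catalog items by how similar their (made-up)
# image vectors are to the vector of the photo the shopper just took.

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an all-zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def most_similar(query_vector, catalog, top_k=2):
    """Return the names of the top_k catalog items closest to the query."""
    ranked = sorted(
        catalog,
        key=lambda name: cosine_similarity(query_vector, catalog[name]),
        reverse=True,
    )
    return ranked[:top_k]


# Hypothetical catalog: product name -> pretend image embedding.
catalog = {
    "red floral dress": [0.9, 0.1, 0.8],
    "blue denim jacket": [0.1, 0.9, 0.2],
    "red plain kurta": [0.8, 0.2, 0.3],
}

photo_vector = [0.85, 0.15, 0.75]  # pretend embedding of the shopper's photo

print(most_similar(photo_vector, catalog))
```

With these made-up numbers, the red floral dress comes out as the closest match, followed by the red plain kurta, while the denim jacket is left out.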

6. Multimodal AI in the Healthcare Sector

A frequently cited example of AI in healthcare research is Google DeepMind’s AlphaFold. It predicts a protein’s 3D structure from its amino acid sequence, drawing on related sequences and known protein structures. This breakthrough has helped accelerate drug discovery and disease research.

Beyond that, multimodal AI is showing up across healthcare:

♦ AI combines medical imaging, patient history, and genetic data for early disease detection.

♦ Analyzing MRI scans, blood tests, and lifestyle factors helps tailor treatments.

♦ AI enhances X-rays, MRIs, and CT scans for precise anomaly detection.

♦ Smart wearables and biosensors track real-time health data.

♦ AI integrates clinical trials and molecular data to speed up research.

♦ AI-driven transcription tools simplify documentation.

How Will This Change Everyday Life?

With AI becoming more multimodal, everyday tasks will become easier and more intuitive. Here’s what’s in store:

♦ Easier Communication: Language barriers? Gone. AI will translate, transcribe, and even mimic accents to help people communicate better.

♦ More Natural Interactions: You’ll no longer have to use robotic commands with your voice assistants. Talk the way you usually would, and AI will catch on.

♦ Better Accessibility: From helping people with disabilities to assisting elders with new technology, AI will become a real helping hand (without having to explain things multiple times!).

♦ Smarter Homes & Cities: Imagine AI-powered street signs that guide people in different languages or smart homes that respond to voice, gestures, and expressions.

So, What’s Next?

Are you excited about what’s coming next? We can’t predict everything, but one thing I’m sure about is that AI is never going to stop evolving. Multimodal AI is just getting started, and the way things are shaping up, we’re heading towards even more seamless interactions between humans and machines. We can expect smarter virtual assistants and tech that feels almost… human.

So, guys! Keep your eyes on the road because things will only get more exciting. Now it’s on us to make the most of it. AI is evolving fast, and it’s time to be part of the revolution. It’s all about making AI more intuitive, creative, and, dare we say, a little more fun. Whether you’re a business looking to integrate AI, a tech enthusiast, or just someone curious about what’s next – we’d love to hear your thoughts!
